Agents Keep Fighting Over My CPU

Local Agents and Shared Resources

I prefer running two or three AI agents locally rather than offloading to remote agents. The feedback loop is faster, I can see what's happening, use the tools I like, and context switching between tasks is easier when everything is on my machine.

My typical setup is one agent on a larger task, a feature or refactor, and one or two others on smaller things like a UI tweak or bug investigation.

For an Android dev, there’s an obvious downside: my laptop takes a beating when running multiple Gradle tasks.

When two agents decide to run Gradle at the same time, my machine grinds to a halt. A single Gradle build is already resource intensive, and concurrent builds compete for the same resources. A build that normally takes 3 minutes stretches to 15. The fans spin up. Everything freezes. And if both agents are trying to deploy to the same emulator, their tasks clash on top of it.

The agents don't know about each other. They can't coordinate.

The Workarounds

There are a few options here, and none of them are great.

You could run multiple emulators so each agent has its own target. Now you're burning even more resources on a machine that's already struggling, and you still haven't solved the problem of multiple expensive tasks running at once.

Or you could manually sequence the tasks yourself. Wait for Agent A to finish its build before letting Agent B run. But now you're babysitting the agents instead of doing your own work. The whole point of running multiple agents is to get more done in parallel, not to become a human task scheduler.

Either way, you're giving up something: time, resources, or attention.

What I Tried First

I built a CLI wrapper. The idea was simple: wrap the command, and it goes into a first-in, first-out (FIFO) queue along with its calling context. One command runs at a time; the rest wait.

queue ./gradlew build
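To make the wrapper idea concrete, here is a minimal sketch of a command wrapper that lets only one command run at a time. It is an illustration, not the original tool: the lock file location and the function names are assumptions, and it serializes with an advisory file lock (`fcntl.flock`, POSIX only), which guarantees one-at-a-time execution but not strict FIFO ordering. A true FIFO queue needs some form of ticketing on top.

```python
import fcntl
import subprocess
import sys
import tempfile
from pathlib import Path

# Hypothetical lock location shared by every wrapper invocation.
LOCK_PATH = Path(tempfile.gettempdir()) / "agent-queue.lock"


def run_serialized(command: list[str], lock_path: Path = LOCK_PATH) -> int:
    """Run `command` while holding an exclusive file lock; return its exit code."""
    with open(lock_path, "w") as lock_file:
        # Blocks here until no other process holds the lock.
        fcntl.flock(lock_file, fcntl.LOCK_EX)
        try:
            return subprocess.run(command).returncode
        finally:
            fcntl.flock(lock_file, fcntl.LOCK_UN)


def main(argv: list[str]) -> int:
    """CLI entry point: everything after the wrapper name is the command."""
    if not argv:
        print("usage: queue <command> [args...]", file=sys.stderr)
        return 2
    return run_serialized(argv)

# As a script, this would end with: sys.exit(main(sys.argv[1:]))
```

The blocking `flock` call is where a waiting command sits idle, and that idle time is what matters for the rest of this story.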

Technically it worked, but there was a problem I didn't anticipate.

AI coding tools have shell timeouts.

Claude Code gives you about 2 minutes by default. Cursor hard-codes 30 seconds. If your command is waiting in queue when the timeout hits, it gets killed.

Then the agents try to “be smart” and run the command directly, bypassing the queue and defeating the whole purpose.

I tried extending the timeouts with environment variables. That helped for Claude Code, but Cursor's limit isn't configurable. Even with longer timeouts, the underlying problem remains: the timeout also counts time spent waiting in the queue, when realistically it should only cover execution time.

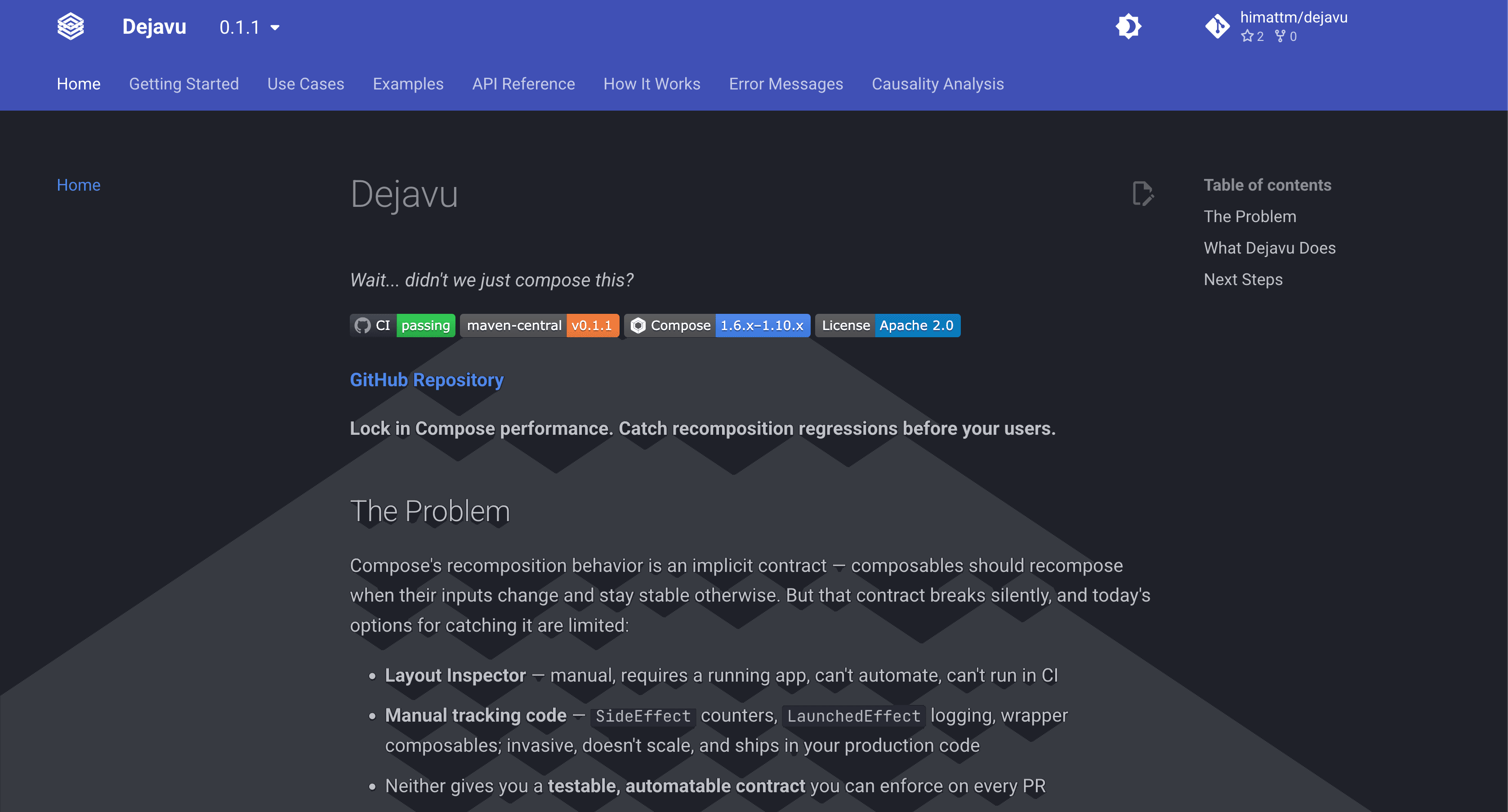
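The distinction between waiting time and execution time can be sketched in a few lines. This is a hedged illustration, not code from the project: `run_with_exec_timeout` and its parameters are hypothetical names, the queue here is just an in-process lock, and the process-group handling assumes a POSIX system. The point is that the wait for a turn has no deadline, while the deadline starts only once the command is actually running.

```python
import os
import signal
import subprocess
import sys
import threading
import time

_build_lock = threading.Lock()  # one command at a time within this process


def run_with_exec_timeout(command: list[str], exec_timeout_s: float):
    """Wait in line with no deadline, then enforce a deadline on execution only.

    Returns (exit_code, seconds_waited); exit_code is None if the command
    was killed for exceeding exec_timeout_s.
    """
    queued_at = time.monotonic()
    with _build_lock:  # queue wait: deliberately unbounded
        waited = time.monotonic() - queued_at
        # start_new_session puts the child in its own process group, so a
        # timeout can kill the command plus anything it spawned.
        proc = subprocess.Popen(command, start_new_session=True)
        try:
            return proc.wait(timeout=exec_timeout_s), waited
        except subprocess.TimeoutExpired:
            os.killpg(proc.pid, signal.SIGKILL)
            proc.wait()  # reap the killed process
            return None, waited
```

A shell-level timeout imposed by the agent cannot make this distinction, because from the outside a queued command and a running command look the same.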

Why Model Context Protocol Works Better

Model Context Protocol (MCP) Tool calls don't go through the shell. The agent connects directly to an MCP server, and that connection stays alive until the Tool returns. There's no external timeout to worry about.

With a CLI, the agent spawns a shell process, the shell runs your command, and if the shell process takes too long, the agent kills it. With MCP, the agent calls a Tool and waits for the response. No shell, no timeout!

So I rewrote the queue as an MCP server. Same concept (FIFO queue, one build at a time), but the timeout problem goes away.

How It Works

Agent A calls run_task with a Gradle command. The MCP server queues it, runs it, returns the result. If Agent B calls the same Tool while A is running, B waits in the queue until A finishes. Both agents block on their Tool calls, but neither times out.
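The blocking behavior described above can be approximated with a small stdlib-only sketch. It is not the project's code: the `TaskQueue` class, the `agent` parameter, and the returned fields are assumptions, and the wiring into an actual MCP server SDK is left out. It relies on `asyncio.Lock`, which is documented to hand the lock to waiters in the order they started waiting.

```python
import asyncio
import sys
import time


class TaskQueue:
    """Runs one command at a time; later callers wait their turn in FIFO order."""

    def __init__(self) -> None:
        self._lock = asyncio.Lock()

    async def run_task(self, agent: str, command: list[str]) -> dict:
        queued_at = time.monotonic()
        async with self._lock:  # the caller blocks here while another task runs
            started_at = time.monotonic()
            proc = await asyncio.create_subprocess_exec(*command)
            exit_code = await proc.wait()
            finished_at = time.monotonic()
        return {
            "agent": agent,
            "waited_s": started_at - queued_at,
            "ran_s": finished_at - started_at,
            "exit_code": exit_code,
        }


async def demo() -> list:
    """Two 'agents' request a stand-in build at the same moment."""
    queue = TaskQueue()
    fake_build = [sys.executable, "-c", "import time; time.sleep(0.3)"]
    return await asyncio.gather(
        queue.run_task("A", fake_build),
        queue.run_task("B", fake_build),
    )
```

Running `asyncio.run(demo())` should show agent A with almost no wait and agent B waiting roughly as long as A's command ran.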

Here's what that looks like in practice:

| Time | Agent A | Agent B |
| --- | --- | --- |
| 0:00 | Started build | |
| 0:02 | Building... | Entered queue, waiting |
| 3:12 | Completed (192.6s) | Started build |
| 3:45 | | Completed (32.6s) |

Agent B's build only took 32 seconds because it didn't have to compete with Agent A. Gradle's daemon was warm, caches were populated, the machine was free.

Total time: 3:45. Without the queue, both builds fighting each other would've taken 10+ minutes, and my laptop would've been unusable.

The implementation is about 600 lines of Python. SQLite with Write-Ahead Logging (WAL) mode for the queue state. Process groups to clean up orphaned builds if an agent crashes. Output goes to log files to avoid eating up context window tokens.
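As a rough illustration of keeping queue state in SQLite with WAL mode, here is a minimal sketch. The table layout, status values, and function names are assumptions made for this example and are not taken from the project; the real schema may look quite different. Each task gets an auto-incrementing id as its ticket, and a task may start only when it holds the lowest id that isn't finished.

```python
import sqlite3
import tempfile
from pathlib import Path


def open_queue(db_path: Path) -> sqlite3.Connection:
    # isolation_level=None means autocommit, so transactions are explicit.
    conn = sqlite3.connect(db_path, isolation_level=None)
    conn.execute("PRAGMA journal_mode=WAL")  # readers don't block the writer
    conn.execute(
        "CREATE TABLE IF NOT EXISTS queue ("
        " id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " command TEXT NOT NULL,"
        " status TEXT NOT NULL DEFAULT 'waiting')"
    )
    return conn


def enqueue(conn: sqlite3.Connection, command: str) -> int:
    """Add a task and return its ticket number."""
    cur = conn.execute("INSERT INTO queue (command) VALUES (?)", (command,))
    return cur.lastrowid


def try_start(conn: sqlite3.Connection, task_id: int) -> bool:
    """Mark the task as running only if it is the oldest unfinished task."""
    conn.execute("BEGIN IMMEDIATE")  # take the write lock before checking
    try:
        row = conn.execute(
            "SELECT MIN(id) FROM queue WHERE status != 'done'"
        ).fetchone()
        is_next = row[0] == task_id
        if is_next:
            conn.execute(
                "UPDATE queue SET status = 'running' WHERE id = ?", (task_id,)
            )
        conn.execute("COMMIT")
        return is_next
    except Exception:
        conn.execute("ROLLBACK")
        raise


def finish(conn: sqlite3.Connection, task_id: int) -> None:
    conn.execute("UPDATE queue SET status = 'done' WHERE id = ?", (task_id,))
```

A caller would enqueue, poll `try_start` until it returns `True`, run the command, and then call `finish` so the next ticket can proceed.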

If you're using Claude Code, you'll want to add instructions to your CLAUDE.md telling it to prefer the MCP Tool over the built-in Bash for build commands. Otherwise it'll just run Gradle directly and skip the queue. The README has the snippet that I use.

Try It Out!

If you're running multiple AI agents on the same machine and they're triggering expensive operations (builds, tests, Docker commands), this might help. The agents serialize automatically instead of fighting over resources.

It's open source under Apache 2.0 and you can install it with uvx:

uvx agent-task-queue@latest

Works with most AI coding tools that support MCP. Check out the repo at github.com/block/agent-task-queue for setup instructions.

If you try it out, I'd love to hear how it goes. Open an issue on GitHub or reach out on Bluesky!